Seongheon Park

Contact. seongheon_park [at] cs [dot] wisc [dot] edu | CV

1210 W Dayton St

Madison, WI 53706

Hello! I’m a second-year PhD student in the Computer Sciences department at the University of Wisconsin-Madison, where I am fortunate to be advised by Prof. Sharon Li. Previously, I completed my MS degree at Yonsei University in the Electrical and Electronic Engineering department under the supervision of Prof. Kwanghoon Sohn and Prof. Kibok Lee.

My research focuses on making foundation models (LLMs, LVLMs, and VLAs) safe and reliable in real-world deployment. Specifically, I study why and how these models fail and how to monitor and correct them through:

- Error detection and mitigation (e.g., hallucination)

- Failure reasoning

- Latent space interpretability

These directions are crucial for preventing catastrophic failures in human-facing and industrial applications, and for enabling robust agentic systems to stop, replan, and incorporate human intervention in an interpretable way. I am also broadly interested in multimodal models and post-training.

news

| Date | News |
|---|---|
| May 18, 2026 | I will be joining Microsoft Research Asia-Tokyo this May as a research intern, working on Embodied AI agents. |
| Apr 14, 2026 | I have passed my PhD qualifying exam! |
| Apr 06, 2026 | Two papers have been accepted to ACL 2026! |
| Jan 18, 2026 | Excited to share that our paper has been accepted at ICLR 2026! |
| Sep 18, 2025 | Happy to share that two of our papers have been accepted at NeurIPS 2025. |

publications

- ACL (Main)

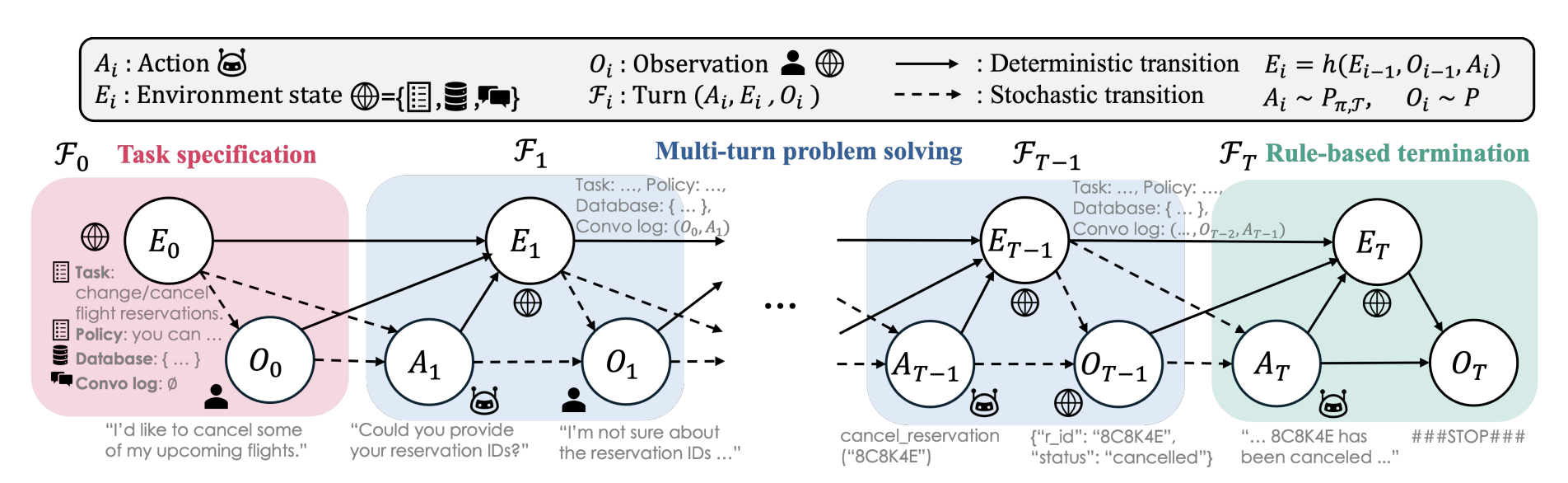

Uncertainty Quantification in LLM Agents: Foundations, Emerging Challenges, and Opportunities. Annual Meeting of the Association for Computational Linguistics, Feb 2026

- ACL (Findings)

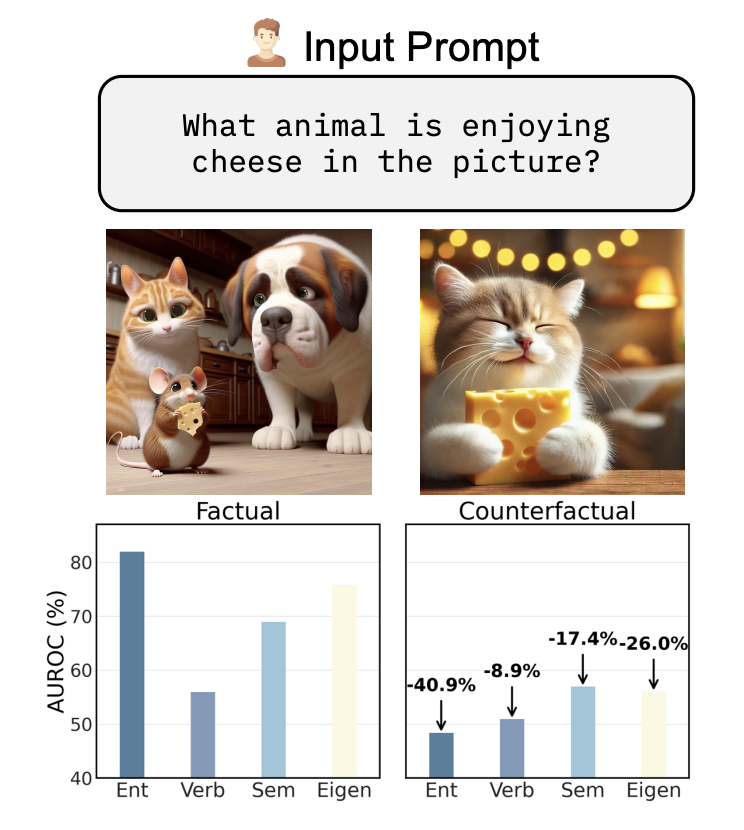

VAUQ: Vision-Aware Uncertainty Quantification for LVLM Self-Evaluation. Annual Meeting of the Association for Computational Linguistics, Jan 2026

- ICLR

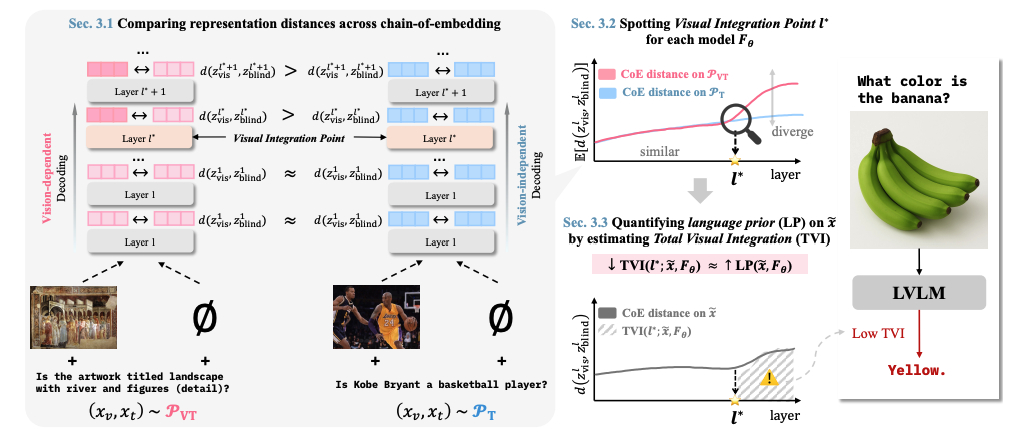

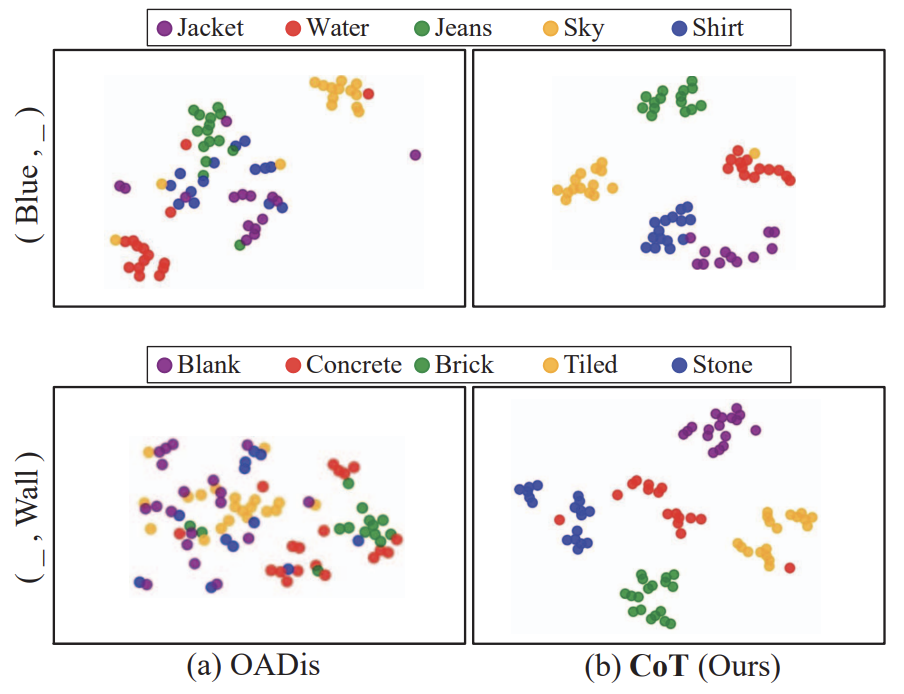

Understanding Language Prior of LVLMs by Contrasting Chain-of-Embedding. International Conference on Learning Representations, Jan 2026

- Preprint

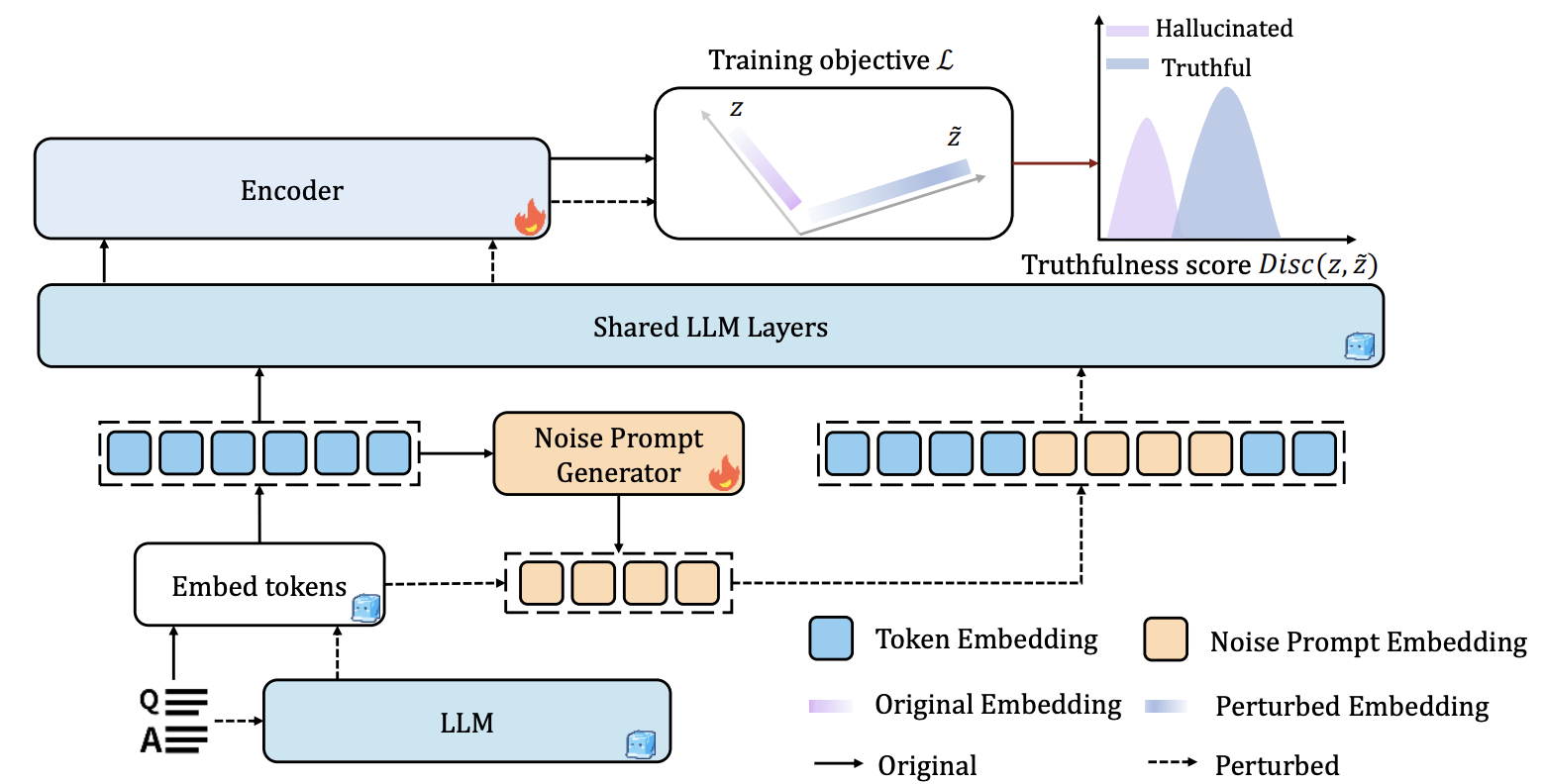

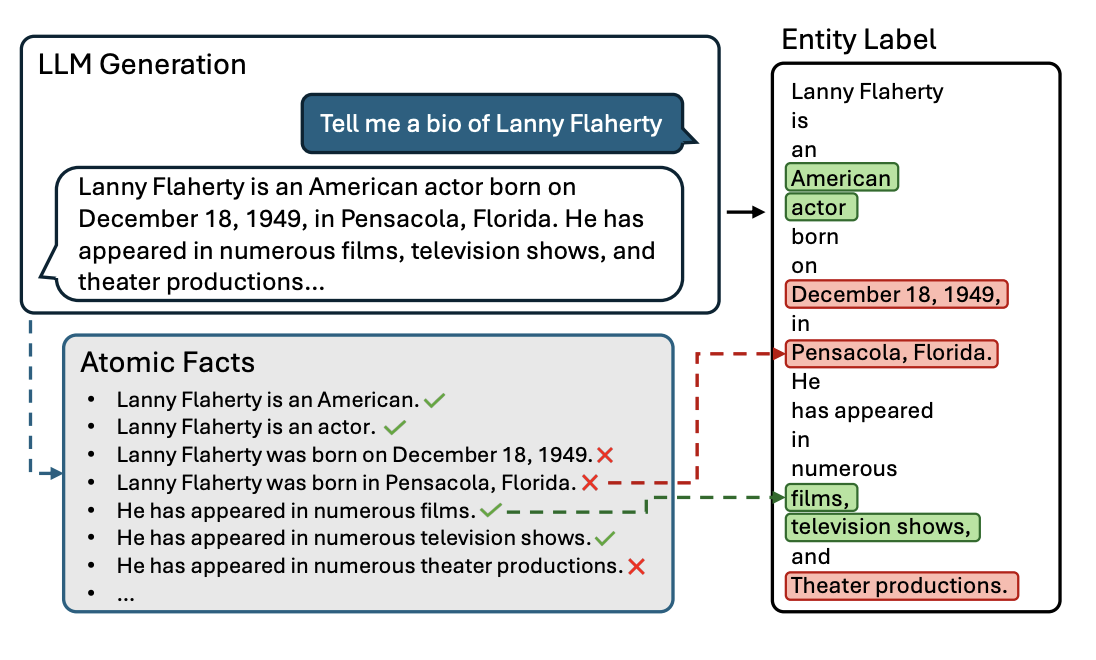

Shaking to Reveal: Perturbation-Based Detection of LLM Hallucinations. arXiv:2506.02696, Jun 2025

- NeurIPS

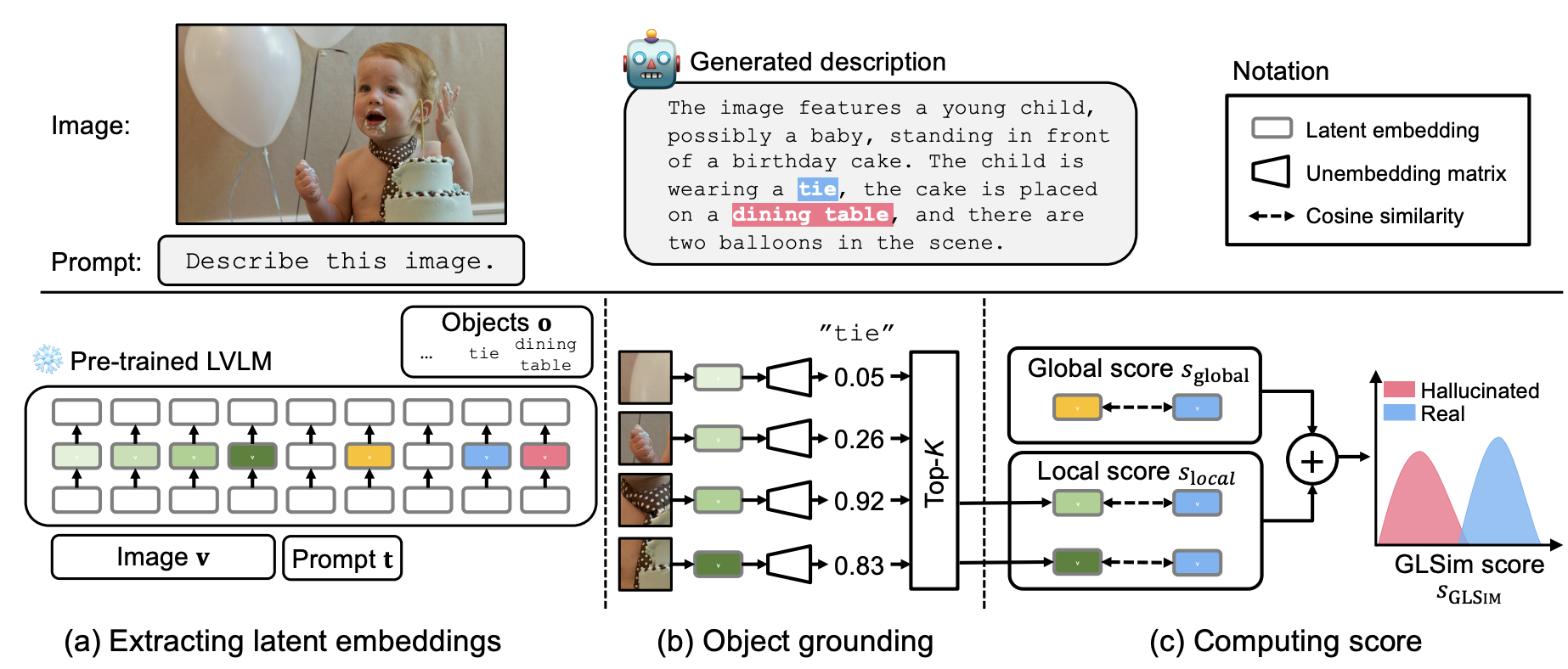

GLSim: Detecting Object Hallucinations in LVLMs via Global-Local Similarity. Conference on Neural Information Processing Systems, Jul 2025

- NeurIPS

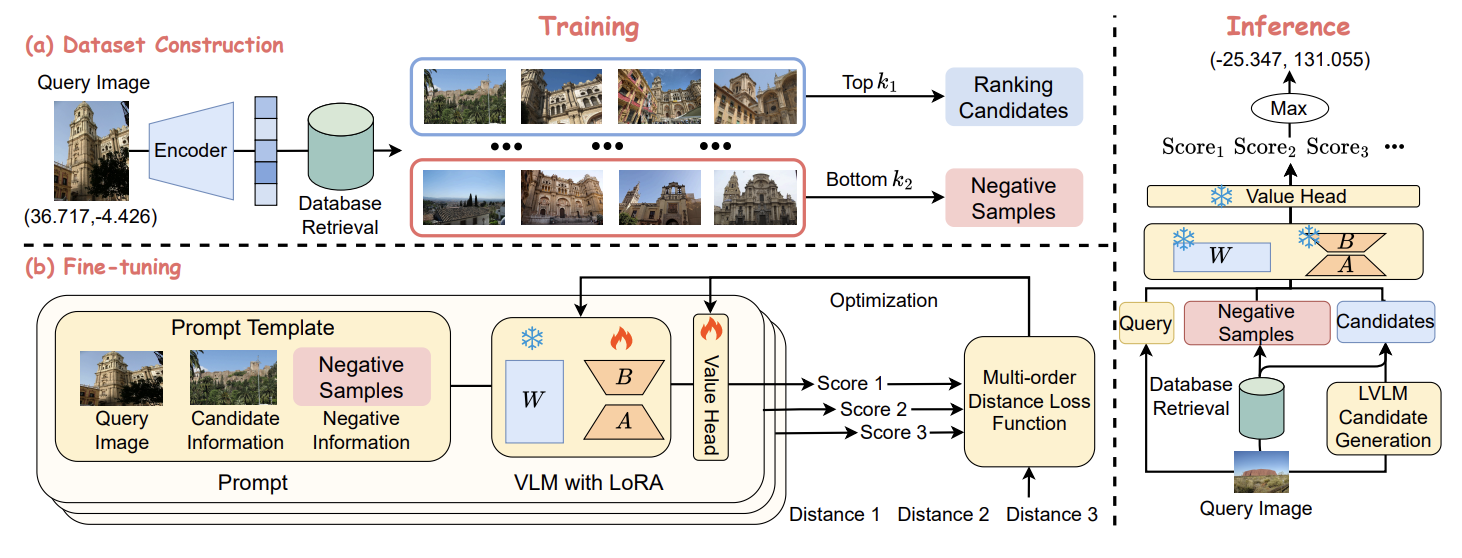

GeoRanker: Distance-Aware Ranking for Worldwide Image Geolocalization. Conference on Neural Information Processing Systems, May 2025

- ICML

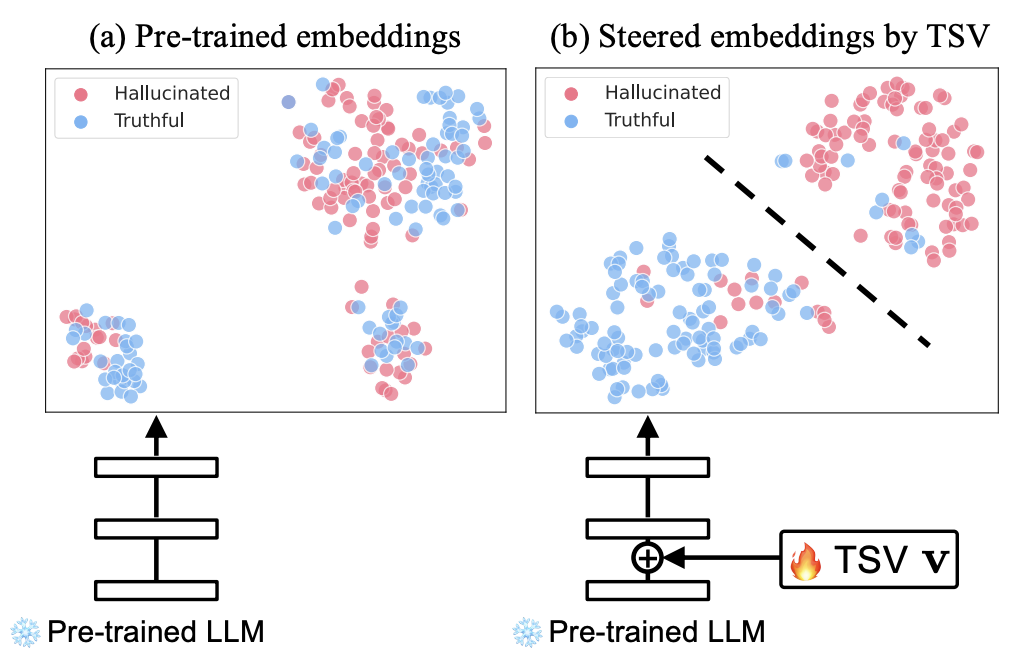

Steer LLM Latents for Hallucination Detection. International Conference on Machine Learning, May 2025

- ICML

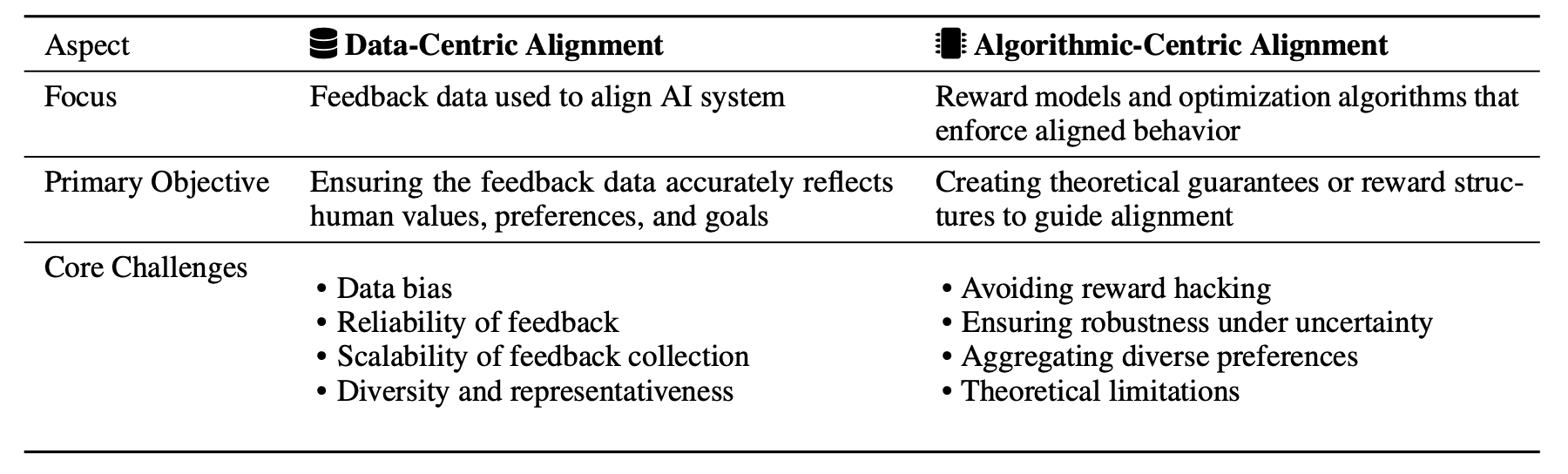

Position: Challenges and Future Directions of Data-Centric AI Alignment. International Conference on Machine Learning, May 2025

- TMLR

HalluEntity: Benchmarking and Understanding Entity-Level Hallucination Detection. Transactions on Machine Learning Research, Mar 2025

- CVPR Workshop

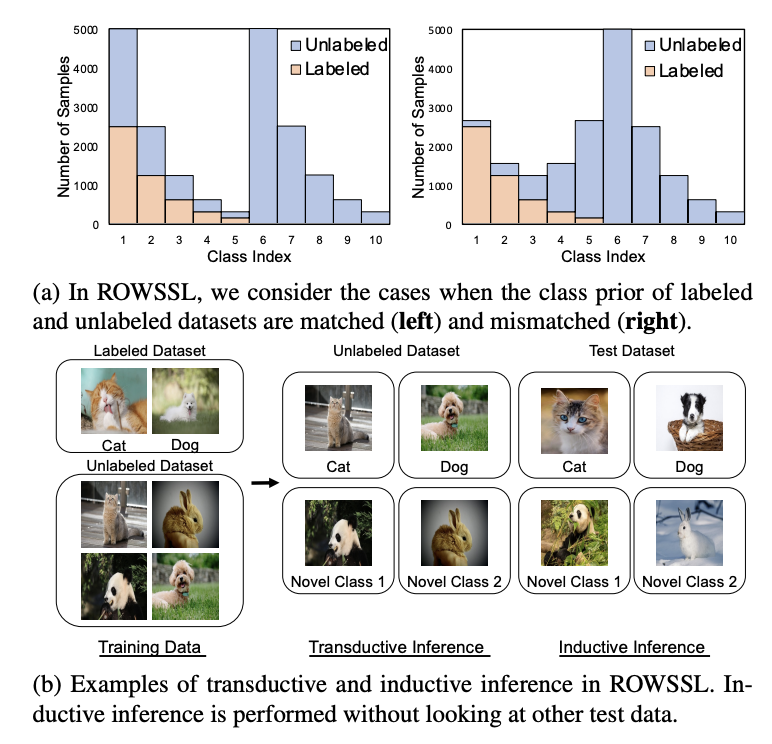

Rethinking Open-World Semi-Supervised Learning: Distribution Mismatch and Inductive Inference. CVPR Workshop on Computer Vision in the Wild, Jun 2024

- ICCV

Hierarchical visual primitive experts for compositional zero-shot learning. IEEE/CVF International Conference on Computer Vision, Oct 2023

- CVPR

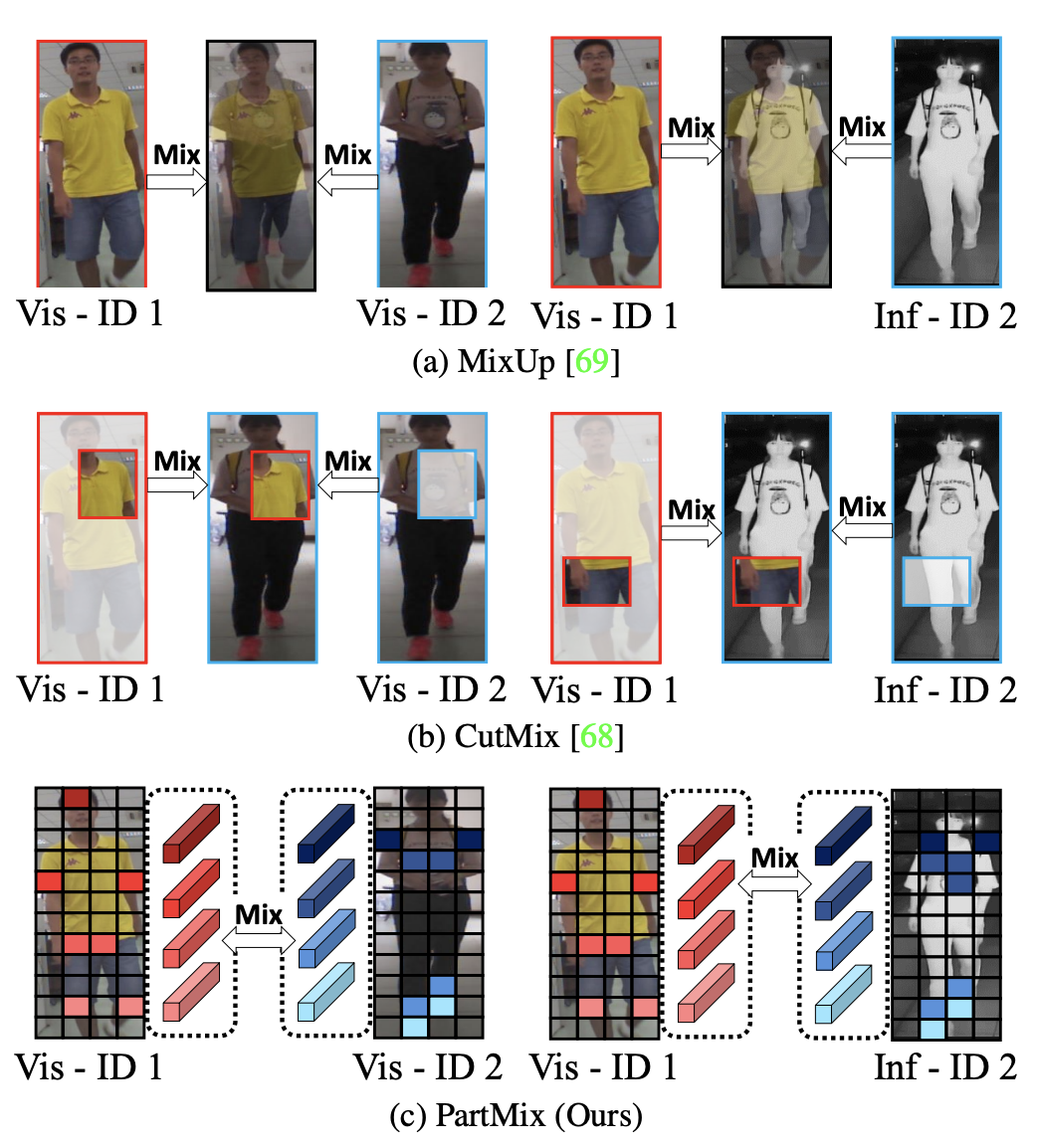

Partmix: Regularization strategy to learn part discovery for visible-infrared person re-identification. IEEE/CVF Conference on Computer Vision and Pattern Recognition, Jun 2023

- WACV

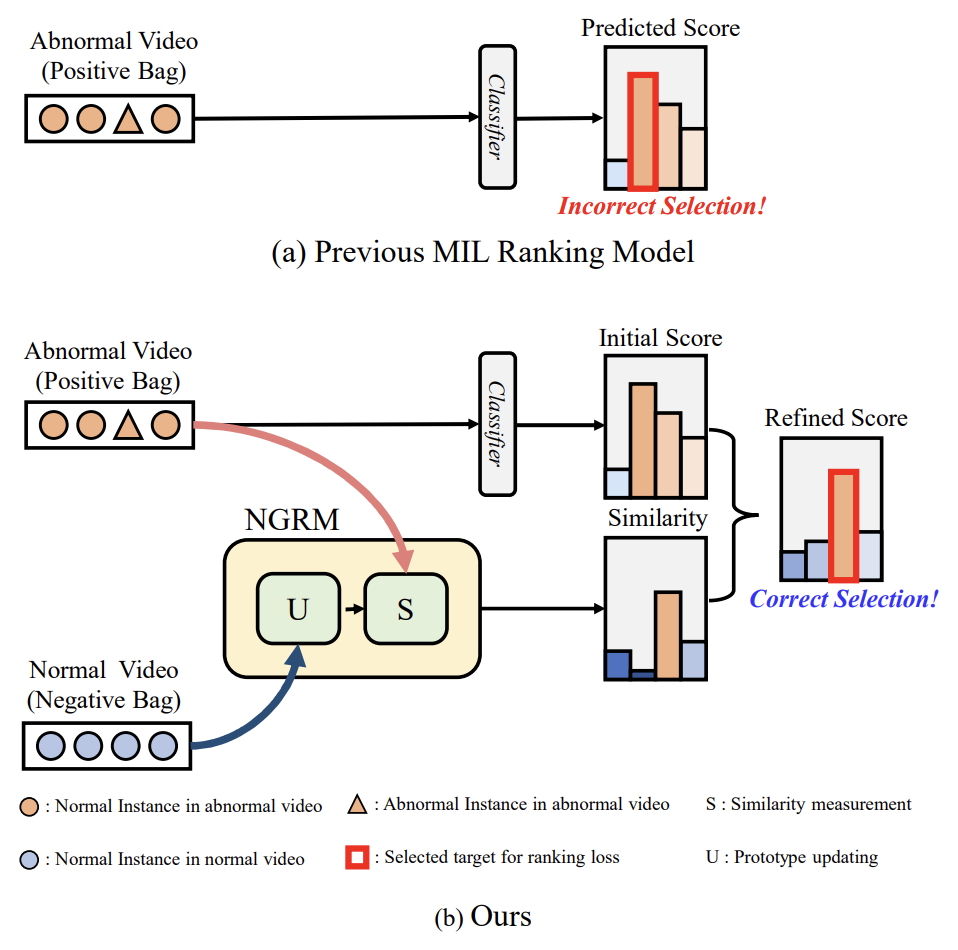

Normality guided multiple instance learning for weakly supervised video anomaly detection. IEEE/CVF Winter Conference on Applications of Computer Vision, Jan 2023

- WACV

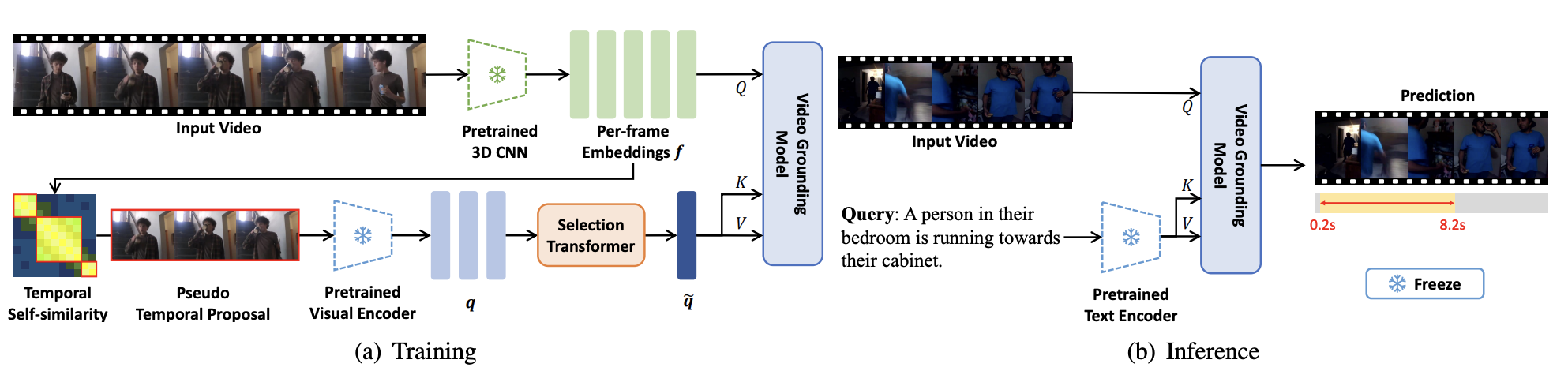

Language-free training for zero-shot video grounding. IEEE/CVF Winter Conference on Applications of Computer Vision, Jan 2023